Project Proposal (Updated)

Notes on Modification of Project Idea

At the initial stage of the project, we decided to implement a parallel version of selected heavily-used functions of the Pandas library using CUDA on GPU. Some example functions are sorting and aggregation. However, after doing extensive research and initial test, we realized that there are many limitations that constrains the amount of parallel optimizations we could do. At the same time, while we research on the topic, we found out that K-means and K-means++ might be a more interesting idea to pursue. Therefore, we modified this proposal to reflect what we will be working on from now on. In the project Checkpoint, we will give a detailed explanation on what we have done so far before and after we changed topic.

At the initial stage of the project, we decided to implement a parallel version of selected heavily-used functions of the Pandas library using CUDA on GPU. Some example functions are sorting and aggregation. However, after doing extensive research and initial test, we realized that there are many limitations that constrains the amount of parallel optimizations we could do. At the same time, while we research on the topic, we found out that K-means and K-means++ might be a more interesting idea to pursue. Therefore, we modified this proposal to reflect what we will be working on from now on. In the project Checkpoint, we will give a detailed explanation on what we have done so far before and after we changed topic.

Project Proposal URL: https://parallelpandas.weebly.com/proposal.html

Summary

We would like to implement a parallel version of K-means algorithm powered by K-means++ initialization algorithm.

We would like to implement a parallel version of K-means algorithm powered by K-means++ initialization algorithm.

Background

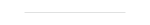

K-means is a classic clustering algorithm that has wide applications. It is relatively intuitive to understand and implement, and the result clusters are rather accurate. From the wikipedia page, K-means algorithm has the following two steps:

K-means is a classic clustering algorithm that has wide applications. It is relatively intuitive to understand and implement, and the result clusters are rather accurate. From the wikipedia page, K-means algorithm has the following two steps:

There are mainly two drawbacks of K-means algorithm:

1. Without good initialization, K-means algorithm is likely to return a sub-optimal clustering result;

2. The algorithm is iterative until convergence, therefore it usually takes quite a long time to run.

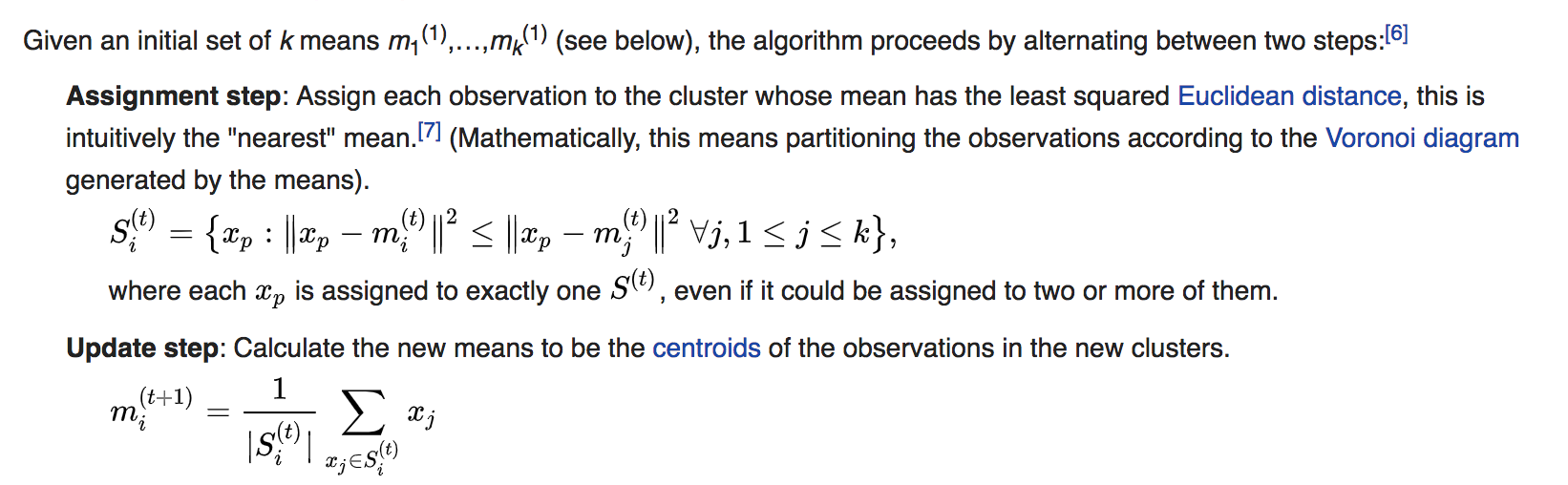

In order to tackle the first problem, an initialization algorithm called K-means ++ is designed, which iteratively select each initial point using the following steps (from Wikipedia page):

Our goal is to parallelize the entire algorithm consists of both K-mean++ and K-mean clustering algorithm.

Challenges

There are a few challenges in this project:

1. The entire algorithm (including both K-mean and K-mean++) is innately sequential. Especially K-means++, in each round, only 1 point is generated after huge computation; Therefore, in order to improve performance, we might need to make tradeoffs on accuracy, or look deep into other possible optimization areas such as memory access;

2. The dataset fed into the algorithm can be very huge (in terms of Gigabytes). Therefore, storing the data and intermediate results might be challenging, especially if we use share memory systems;

3. The data has large variations. For example, the dimension of each datapoint might be small (like 2), or very large (like 5000); also, number of clusters might also vary a lot. We need to ensure that under different set of parameters, our program can always perform well. Therefore, we might need to employ different strategies (or even use different platforms) for different input size and requirement.

Resources

Goals and Deliverables

Plan to achieve

Hope to achieve

Demo

Platform Choice

We will mostly use MPI on the Latedays machines for our parallelization. If we have finished the MPI optimizations ahead of schedules, we would also look into using Cuda on GPU to further speeding up our program.

Schedule

(Updated to show the schedule for new project idea only; detailed half-week schedule is in the Checkpoint report)

There are a few challenges in this project:

1. The entire algorithm (including both K-mean and K-mean++) is innately sequential. Especially K-means++, in each round, only 1 point is generated after huge computation; Therefore, in order to improve performance, we might need to make tradeoffs on accuracy, or look deep into other possible optimization areas such as memory access;

2. The dataset fed into the algorithm can be very huge (in terms of Gigabytes). Therefore, storing the data and intermediate results might be challenging, especially if we use share memory systems;

3. The data has large variations. For example, the dimension of each datapoint might be small (like 2), or very large (like 5000); also, number of clusters might also vary a lot. We need to ensure that under different set of parameters, our program can always perform well. Therefore, we might need to employ different strategies (or even use different platforms) for different input size and requirement.

Resources

- CMU Latedays to run multi-processor programs using MPI (and potentially Cuda).

- Source code on Github.com with implementation of single-processor K-means and K-means ++ algorithm.

- UCI machine learning repository to obtain various datasets;

Goals and Deliverables

Plan to achieve

- Make use of MPI to effectively speed up K-means algorithm;

- Use MPI, and other performance optimization strategies to speed up the sequential initialization K-means++ algorithm;

- Vary the input size such as dimension of the data and number of clusters, and ensure that the algorithm have good speedup under each scenario.

Hope to achieve

- Since the pairwise distance calculation is pretty computational heavy and rather independent, we would like to look into possibilities in incorporating Cuda on GPU to our MPI-optimized algorithm, and speed up the computations.

Demo

- We would display a performance graph showing the difference in performance between our parallel implementation and the original sequential version of the algorithm under different problem size;

- We would provide a live demo on how the clustering algorithm performs; Hopefully we could provide a visual representation (such as a GIF) to show graphically how the algorithm is developed on 2-dimensional dataset.

Platform Choice

We will mostly use MPI on the Latedays machines for our parallelization. If we have finished the MPI optimizations ahead of schedules, we would also look into using Cuda on GPU to further speeding up our program.

Schedule

(Updated to show the schedule for new project idea only; detailed half-week schedule is in the Checkpoint report)

- Week 1: Optimize K-means part of the algorithm using MPI, and look into customizing optimization strategies given different input size;

- Week 2: Optimize K-means ++ part of the algorithm using MPI, and look into customizing optimization strategies given different input size;

- Week 3: Finish developing different optimization strategies for different problem size and cluster numbers;

- Week 4: Complete report and prepare for demo and presentation;